A $13.5K Open-Source Humanoid Robot: Inside Unitree G1's AI Stack

Unitree ships a humanoid robot with 43 degrees of freedom, a full AI training pipeline on GitHub, and Apple Vision Pro teleoperation — for $13.5K. Here's what the developer ecosystem looks like.

I watched a documentary about China’s rise, “Comment la Chine est devenue imbattable?” (“How did China become unbeatable?”) by Génération Do It Yourself, and one thing stuck with me: China’s innovation is culture-driven. Not just cheap labor. Not just scale. Culture. That lens led me to Unitree Robotics, and what I found blew me away.

The Problem

Humanoid robotics has been locked behind two walls: price and openness. Boston Dynamics’ Atlas isn’t sold to developers and ships zero source code. Tesla’s Optimus is vaporware for developers. If you’re a builder who wants to experiment with a real humanoid (train locomotion policies, test manipulation tasks, run your own AI models), there’s been nothing accessible.

Meanwhile, a company in Hangzhou has been quietly shipping the opposite: an affordable humanoid robot with its entire AI stack open-sourced on GitHub. 43 repositories. Full sim-to-real pipeline. VLA models. Apple Vision Pro teleoperation. And a starting price of $13,500.

The Solution

The Unitree G1 is a 1.32m, 35kg humanoid robot with up to 43 degrees of freedom, available in two variants:

| Spec | G1 Standard ($13.5K) | G1 EDU (Contact Sales) |
|---|---|---|
| DOF | 23 | 23-43 (configurable) |
| Compute | 8-core CPU | 8-core CPU + NVIDIA Jetson Orin |
| Sensors | Depth camera, 3D LiDAR, 4-mic array | Same + extended |
| Arm payload | ~2 kg | ~3 kg |
| Max knee torque | 90 N·m | 120 N·m |
| Hands | Optional Dex3-1 (7 DOF) | Optional |
| Battery life | ~2 h | ~2 h |
| Connectivity | WiFi 6, Bluetooth 5.2 | Same |

But the hardware is only half the story. The real disruption is the software ecosystem.

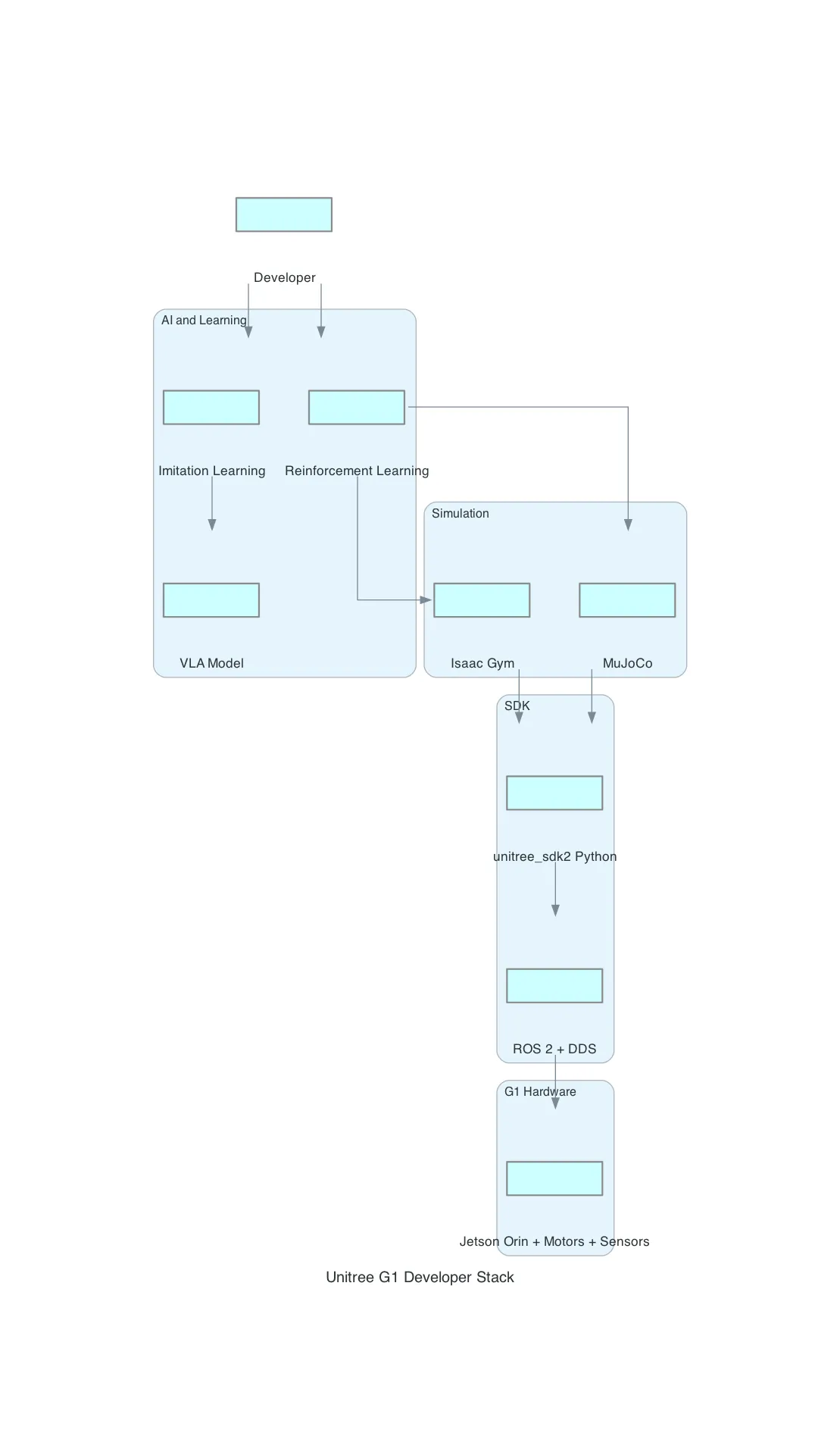

How It Works

Layer 1: The SDK — Direct Robot Control

unitree_sdk2_python is the entry point. It communicates with the robot over DDS (Data Distribution Service) and gives you two levels of control:

High-level: Sport modes — walking, standing, velocity control, attitude adjustment, trajectory tracking. You send commands; the robot’s built-in controller handles balance and gait.

Low-level: direct joint motor control. Set the target position (q), the gains (kp, kd), and a feed-forward torque (tau) for each of the 23-43 joints individually. This is where custom locomotion policies run.

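```python
# High-level: make the robot walk forward at 0.5 m/s.
# Assumes sport_client is an already-initialized high-level locomotion client.
sport_client.Move(0.5, 0.0, 0.0)  # vx [m/s], vy [m/s], vyaw [rad/s]

# Low-level: control an individual joint.
# Assumes motor_cmd is one joint's entry in an already-created low-level command message.
motor_cmd.q = target_position  # target joint angle [rad]
motor_cmd.kp = 50.0            # stiffness (position gain)
motor_cmd.kd = 3.0             # damping (velocity gain)
motor_cmd.tau = 0.0            # feed-forward torque [N·m]
```

Requirements: Python 3.8+, cyclonedds, numpy, opencv. The SDK also has a C++ version for performance-critical applications and integrates with ROS 2 via unitree_ros2.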
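On Unitree's joint motors, the low-level fields are commonly described as a PD controller with a feed-forward term: the driver turns the position error, the velocity error, and tau into an output torque. A minimal sketch of that law, assuming the standard form (check Unitree's motor documentation for the exact convention), using the kp = 50 and kd = 3 gains from the low-level snippet:

```python
def joint_torque(q_des, q, dq_des, dq, kp, kd, tau_ff):
    """PD-plus-feedforward law: tau_ff + kp * (q_des - q) + kd * (dq_des - dq)."""
    return tau_ff + kp * (q_des - q) + kd * (dq_des - dq)


# Joint is 0.1 rad short of its 0.5 rad target and moving toward it at 0.2 rad/s.
tau = joint_torque(q_des=0.5, q=0.4, dq_des=0.0, dq=0.2, kp=50.0, kd=3.0, tau_ff=0.0)
print(f"commanded torque: {tau:.2f} N·m")  # 50 * 0.1 - 3 * 0.2 = 4.40
```

Raising kp makes the joint track its target more stiffly, kd damps the motion, and tau lets a learned policy or a model-based controller inject torque directly, for example to compensate for gravity.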

Layer 2: Simulation — Train Before You Walk

You don’t start on real hardware. Unitree provides three simulation environments:

NVIDIA Isaac Gym (unitree_rl_gym) — GPU-accelerated parallel training for reinforcement learning. Thousands of G1 instances learning to walk simultaneously. The pipeline is explicit: Train → Play → Sim2Sim → Sim2Real.

MuJoCo (unitree_mujoco) — Physics simulation with terrain generation. Good for validation and testing outside the NVIDIA ecosystem. Supports both C++ and Python.

Isaac Lab (unitree_sim_isaaclab) — NVIDIA’s newer simulation framework with task-specific environments for the G1.
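Isaac Gym's throughput comes from batching: the state of every environment lives in one GPU tensor, and a single vectorized update steps them all at once instead of looping over robots. A toy NumPy sketch of that idea (CPU only, a 1-D point mass under PD control standing in for a G1, no Isaac Gym APIs involved):

```python
import numpy as np


def step_all(pos, vel, target, kp=20.0, kd=2.0, dt=0.01):
    """Advance every environment by one time step in a single batched update."""
    force = kp * (target - pos) - kd * vel  # PD force, computed for all envs at once
    vel = vel + force * dt                  # unit mass, semi-implicit Euler
    pos = pos + vel * dt
    return pos, vel


num_envs = 4096
rng = np.random.default_rng(0)
pos = rng.uniform(-1.0, 1.0, size=num_envs)  # randomized initial states
vel = np.zeros(num_envs)
target = np.zeros(num_envs)

for _ in range(500):  # 5 simulated seconds for all 4096 envs
    pos, vel = step_all(pos, vel, target)

print(f"worst-case distance to target: {np.abs(pos).max():.4f}")
```

On a GPU the same array expressions run over all environments in parallel, which is what makes millions of training episodes affordable.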

The sim-to-real transfer is the critical piece. Policies trained in simulation transfer to the physical robot. Unitree provides the URDF models, tuned simulation parameters, and deployment scripts to make this work.
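A URDF file is plain XML: each non-fixed `<joint>` is one degree of freedom, and its `<limit>` tag carries position, effort, and velocity limits that the simulator enforces. A stdlib-only sketch that counts actuated joints, run on a made-up three-joint fragment (names and numbers are illustrative, not taken from Unitree's G1 model):

```python
import xml.etree.ElementTree as ET

# Hypothetical, heavily trimmed URDF (illustrative values, not Unitree's G1 model).
URDF = """
<robot name="toy_leg">
  <link name="pelvis"/>
  <link name="thigh"/>
  <link name="shin"/>
  <link name="foot"/>
  <joint name="hip_pitch" type="revolute">
    <parent link="pelvis"/>
    <child link="thigh"/>
    <limit lower="-1.5" upper="1.5" effort="50" velocity="20"/>
  </joint>
  <joint name="knee" type="revolute">
    <parent link="thigh"/>
    <child link="shin"/>
    <limit lower="0.0" upper="2.5" effort="60" velocity="20"/>
  </joint>
  <joint name="foot_mount" type="fixed">
    <parent link="shin"/>
    <child link="foot"/>
  </joint>
</robot>
"""


def actuated_joints(urdf_text):
    """Map each non-fixed joint's name to its effort limit (None if absent)."""
    root = ET.fromstring(urdf_text.strip())
    joints = {}
    for joint in root.findall("joint"):  # direct children only, skips <transmission> entries
        if joint.get("type") == "fixed":
            continue  # a fixed joint adds no degree of freedom
        limit = joint.find("limit")
        joints[joint.get("name")] = float(limit.get("effort")) if limit is not None else None
    return joints


joints = actuated_joints(URDF)
print(f"DOF: {len(joints)}")  # DOF: 2
for name, effort in joints.items():
    print(f"{name}: max effort {effort} N·m")
```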

Layer 3: AI Models — From RL to Vision-Language-Action

This is where it gets serious. Unitree doesn’t just give you a robot and an SDK. They give you the full AI research stack.

Reinforcement Learning (unitree_rl_gym, unitree_rl_lab) — Train locomotion policies using Isaac Gym. The G1 learns to walk, turn, handle terrain, and recover from perturbations through millions of simulated episodes.
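During training, the policy maximizes a weighted sum of reward terms computed for every environment in parallel, and a central term rewards following the commanded velocity. A NumPy sketch of an exponential tracking term modeled on the legged_gym codebase that unitree_rl_gym builds on (the sigma of 0.25 mirrors legged_gym's default; the G1 config may use different terms and weights):

```python
import numpy as np


def tracking_reward(cmd_vel_xy, base_vel_xy, sigma=0.25):
    """Velocity-tracking reward per environment.

    cmd_vel_xy, base_vel_xy: arrays of shape (num_envs, 2) in m/s.
    Returns 1.0 for perfect tracking, decaying toward 0 as the squared error grows.
    """
    sq_error = np.sum(np.square(cmd_vel_xy - base_vel_xy), axis=1)
    return np.exp(-sq_error / sigma)


cmd = np.array([[0.5, 0.0], [0.5, 0.0]])     # both envs commanded to walk forward at 0.5 m/s
actual = np.array([[0.5, 0.0], [0.0, 0.0]])  # env 0 tracks, env 1 stands still
print(tracking_reward(cmd, actual))          # ≈ [1.0, 0.368]
```

The exponential keeps the reward bounded and smooth, so small tracking errors still produce a useful gradient signal instead of a hard pass/fail.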

Imitation Learning (unitree_IL_lerobot) — This one is fascinating. They’ve adapted Hugging Face’s LeRobot framework for the G1 with dual-arm dexterous hands. You teleoperate the robot (collect demonstrations), then train policies using ACT, Diffusion Policy, or Pi0 models. A pre-built dataset — “G1_Dex3_ToastedBread_Dataset” — is available on Hugging Face for immediate experimentation.
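At its core, imitation learning is supervised learning on demonstrations: the policy learns to output the action the operator took in each observed state. ACT, Diffusion Policy, and Pi0 are far richer models, but the simplest instance, linear behavior cloning on synthetic data, shows the shape of the problem (nothing here uses LeRobot's API, and the data is random, not a real G1 dataset):

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for teleoperation demos: 200 (observation, action) pairs.
# A real dataset would hold camera frames and joint states, not 6 random numbers.
obs = rng.normal(size=(200, 6))
true_W = rng.normal(size=(6, 2))  # the "operator" this toy data is generated from
actions = obs @ true_W + 0.01 * rng.normal(size=(200, 2))

# Behavior cloning with a linear policy: least-squares fit of actions from observations.
W, *_ = np.linalg.lstsq(obs, actions, rcond=None)


def policy(observation):
    """Cloned policy: predict the operator's action for a new observation."""
    return observation @ W


new_obs = rng.normal(size=6)
print("cloned:  ", policy(new_obs))
print("operator:", new_obs @ true_W)
```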

Vision-Language-Action (unifolm-vla) — The crown jewel. UnifoLM VLA-0 takes a vision-language foundation model and fine-tunes it on robot manipulation data. The result: you give the G1 a natural language instruction (“fold the towel”), and it translates vision + language understanding into motor actions. 12 categories of complex manipulation tasks with a single policy. Stacking blocks, pouring liquid, folding towels, packing boxes, wiping surfaces, and more.
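How does a language model emit motor commands at all? One widely used approach in VLA models (RT-2 and OpenVLA, for example) discretizes each action dimension into bins, so actions become tokens the model predicts like words. Whether UnifoLM VLA-0 uses this scheme or a continuous action head is not covered here; the sketch only illustrates the binning idea, with an assumed 256 bins and a normalized [-1, 1] action range:

```python
import numpy as np

N_BINS = 256  # assumed number of bins per action dimension


def tokenize(action, low, high, n_bins=N_BINS):
    """Map continuous actions in [low, high] to integer bin indices."""
    scaled = (np.clip(action, low, high) - low) / (high - low)  # -> [0, 1]
    return np.minimum((scaled * n_bins).astype(int), n_bins - 1)


def detokenize(tokens, low, high, n_bins=N_BINS):
    """Map bin indices back to the center of each bin."""
    return low + (tokens + 0.5) * (high - low) / n_bins


low, high = np.full(3, -1.0), np.full(3, 1.0)
action = np.array([0.25, -0.8, 1.0])  # e.g. three normalized joint deltas
tokens = tokenize(action, low, high)
print(tokens, detokenize(tokens, low, high))
```

The round trip loses at most half a bin width per dimension, which is the precision trade-off this representation makes in exchange for reusing the language model's token-prediction machinery.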

World Model (unifolm-world-model-action) — A world-model-action architecture that spans multiple robotic embodiments. This is the foundation for generalized robot intelligence — not just task-specific policies.

Layer 4: Teleoperation — Your Body as the Controller

xr_teleoperate lets you control the G1 in real-time using:

- Apple Vision Pro — hand tracking, immersive VR view through the robot’s cameras

- Meta Quest 3 — controller-based input

- PICO 4 — same capabilities

The operator wears the headset, sees through the robot’s eyes via WebRTC streaming, and controls the arms and dexterous hands with natural hand gestures. This isn’t just for fun — it’s the data collection pipeline for imitation learning. Every teleoperation session generates training data that can feed directly into the LeRobot framework.
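In data terms, each teleoperation session is a sequence of timestamped frames pairing what the robot observed with what the operator commanded. A hypothetical, minimal recorder (the class names, fields, and 30 Hz rate are illustrative assumptions, not xr_teleoperate's or LeRobot's actual data format):

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Frame:
    timestamp: float              # seconds since episode start
    joint_positions: List[float]  # what the robot observed
    action: List[float]           # arm/hand targets derived from the operator's hands


@dataclass
class Episode:
    task: str
    frames: List[Frame] = field(default_factory=list)

    def record(self, t: float, joints: List[float], action: List[float]) -> None:
        self.frames.append(Frame(t, list(joints), list(action)))

    def to_training_pairs(self) -> List[Tuple[List[float], List[float]]]:
        """(observation, action) pairs, the shape imitation learning consumes."""
        return [(f.joint_positions, f.action) for f in self.frames]


ep = Episode(task="fold the towel")
for step in range(3):
    t = step / 30.0  # assuming a 30 Hz control loop
    ep.record(t, joints=[0.1 * step, 0.0], action=[0.1 * (step + 1), 0.0])

print(len(ep.to_training_pairs()), ep.to_training_pairs()[0])
```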

The Full Pipeline

Put it all together and you get a complete development cycle:

- Simulate — Train locomotion in Isaac Gym (millions of episodes, GPU-accelerated)

- Transfer — Deploy trained policy to real G1 via Sim2Real

- Teleoperate — Use Apple Vision Pro to demonstrate manipulation tasks

- Learn — Feed demonstrations into LeRobot for imitation learning

- Scale — Fine-tune VLA model for natural language instruction following

- Deploy — Run inference on Jetson Orin (EDU version) for autonomous operation

This is not a toy. This is a production robotics AI development platform sold for the price of a used car.

What I Learned

- China’s robotics innovation is culture-driven, not cost-driven. The $13.5K price point gets attention, but the real story is the 43 open-source repositories. Unitree’s approach (ship the hardware cheap, open-source the entire AI stack, build a developer ecosystem) is a strategic choice rooted in a culture that values rapid iteration and ecosystem building over IP protection. The documentary was right.

- The VLA model changes the game. Vision-Language-Action means you can instruct a robot in natural language and it figures out the motor commands. Unitree’s UnifoLM VLA-0 handles 12 categories of complex manipulation tasks with a single policy. We’re past the era of programming robots; we’re entering the era of prompting them.

- Apple Vision Pro found its killer app. Forget spatial computing for productivity. Teleoperation of humanoid robots, seeing through their eyes and controlling their hands with yours, is the use case that justifies the hardware. And it doubles as a data collection tool for training AI models. Brilliant.

- The Sim2Real pipeline is mature. The gap between simulation and reality has been the graveyard of robotics research for decades. Unitree ships a working pipeline: Isaac Gym → MuJoCo validation → real robot. With their tuned URDF models and deployment scripts, the transfer actually works.

- Europe has a question to answer. Unitree ships a $13.5K humanoid with 43 open-source repos. In Europe, we’re still debating AI regulation frameworks. The question isn’t whether humanoid robots will be part of daily life. It’s whether European builders will participate in shaping that future or just consume it.

Do It Yourself

Key takeaways:

- Open-source beats closed-source for rapid iteration. Unitree’s 43 GitHub repos mean you can start training locomotion policies in Isaac Gym today — no waiting for vendor SDKs or paying for closed APIs. The hardware is $13.5K, but the software stack is free and battle-tested.

- Sim-to-real transfer works, but you need the right tools. The pipeline is explicit: train in Isaac Gym → validate in MuJoCo → deploy to real hardware. Unitree provides the URDF models and deployment scripts to make this work, but expect iteration to tune simulation parameters.

- VLA models change what “programming a robot” means. You’re not writing motion planners anymore — you’re prompting a model with natural language (“fold the towel”) and letting it figure out the motor commands. The 12-task policy is just the starting point; the architecture generalizes.

Try it now:

- Explore the AI stack without hardware: Clone the unitree_rl_gym repo and run the G1 locomotion training in Isaac Gym (requires an NVIDIA GPU). The README has a one-command Docker setup.

- See the VLA model in action: Check out the unifolm-vla repository for the vision-language-action model. The paper and demo videos show the 12 manipulation tasks. Download the pre-trained weights from Hugging Face and run inference locally.

- Try teleoperation simulation: If you have an Apple Vision Pro or Meta Quest 3, explore the xr_teleoperate repo. It includes a simulation mode where you can control a virtual G1 before touching real hardware; this is the same interface used for data collection in the imitation learning pipeline.
